Goodbye Old Textbooks? Hello K-12 AI Learning! The 2026 Education Revolution

The future of K-12 education is here, blending AI innovation with human guidance.

Forget the dusty textbooks of yesterday; a profound transformation is sweeping through K-12 education. By 2026, the global educational landscape is defined by what experts call the "Great Educational Decoupling": a systemic shift in which instructional intelligence is no longer tethered to static, paper-based media but is integrated into dynamic, AI-driven ecosystems [1]. This isn't merely an upgrade; it's a foundational re-architecture of how students learn and teachers teach, moving beyond experimentation into a period characterized by rigid governance, measurable fiscal returns, and sophisticated technical grounding.

The primary catalyst for this transition is a persistent efficacy gap: traditional textbooks, with their one-size-fits-all approach, have struggled to address the diverse cognitive needs of modern classrooms. AI tools, in contrast, offer a "learning multiplier" effect: students using personalized AI tutors achieve up to 30% better learning outcomes and 70% higher course completion rates than with conventional methods [2]. The shift is also validated economically by a 20–40% reduction in administrative costs, which lets districts reallocate budget previously consumed by recurring textbook purchases and labor-intensive manual processes [3].

From static pages to dynamic algorithms: the great decoupling in K-12 education.

However, the 2026 landscape is also one of profound regulatory scrutiny. The European Union's AI Act, which reached a critical enforcement milestone on August 2, 2026, classifies most educational AI as "high-risk," mandating unprecedented levels of transparency and human oversight [4]. This report examines the nuances of this transition: regional adoption statistics, the technical mechanisms underpinning leading platforms like Khan Academy and 360Learning, and the socio-technical frameworks needed to ensure that the burgeoning "AI Divide" does not exacerbate global educational inequality.

Global Adoption Trajectories: The Statistical Erosion of Print

The statistics for 2026 confirm that AI is no longer a peripheral "add-on" but the fundamental infrastructure of modern schooling. The adoption rate of generative AI within educational organizations stands at 86%, the highest of any major industry [2]. This saturation reflects a global recognition that traditional textbooks are insufficient for a workforce in which 70% of necessary job skills are expected to change because of rapid technological disruption [5].

"AI has the potential to support a single teacher who is trying to generate 35 unique conversations with each student."

The global market for AI in education has expanded at a compound annual growth rate (CAGR) of 36.02% between 2024 and 2026 [6]. While North America continues to command the largest market share at 38%, the Asia-Pacific region is the fastest-growing, with a 48% CAGR [1]. This growth is fueled primarily by large-scale government investment in nations like China, Indonesia, and Thailand, where over 77% of citizens view AI products as more beneficial than harmful, compared with only 40% in Canada and 39% in the United States [7].
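
To make the growth figure concrete, the short sketch below converts the quoted 36.02% CAGR into a total growth multiple. It assumes that "between 2024 and 2026" means two annual compounding steps; the function name is illustrative and does not come from the cited report.

```python
def compound_growth_multiple(cagr: float, years: int) -> float:
    """Return the total growth multiple implied by a constant annual rate."""
    return (1.0 + cagr) ** years


# Figure quoted in the text: 36.02% CAGR, assumed here to span two annual steps.
multiple = compound_growth_multiple(0.3602, 2)
print(f"Implied market multiple over two years: {multiple:.2f}x")  # 1.85x
```

Under that assumption, the market in 2026 would be roughly 1.85 times its 2024 size.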

Adoption rates also vary significantly by educational level. By 2026, university-level integration has reached near-total saturation at 92%, whereas K-12 adoption for daily classroom use hovers at approximately 45% [5]. Within the K-12 sector, a discernible "seniority gap" exists: students in grades 11 and 12 are twice as likely to engage with AI tools as their counterparts in grades 7 and 8 [6]. Furthermore, a remarkable 83% of K-12 teachers now utilize generative AI for school-related activities, strongly suggesting that the transition is being driven by educators actively seeking to manage the escalating administrative burdens associated with larger class sizes [5]. A common misconception is that AI is solely about replacing teachers, when in reality, it's primarily a tool for administrative relief and personalized student support.

The implications of these statistics are profound. The rapid adoption in the Global South, particularly in countries with deeply ingrained "educational value" cultures, suggests a potential leapfrogging effect. Regions with less entrenched textbook-legacy infrastructure may adopt advanced AI tutors more swiftly than Western districts often burdened by long-term print contracts and slower bureaucratic shifts.

Strategic Integration for Sustainable Growth

Districts should eschew "blanket" adoption strategies. Instead, implement a phased rollout targeting high-school levels first, where student autonomy and AI literacy are inherently higher. Educational leaders must conduct a thorough "Legacy Audit" to identify existing print contracts that can be strategically phased out in favor of scalable AI licenses, ensuring that budget shifts align precisely with actual classroom usage patterns and maximize efficiency.

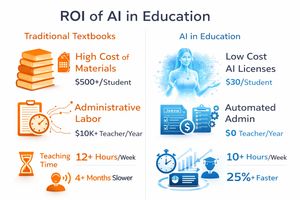

The Fiscal Pivot: ROI Comparisons and Economic Logic

The imperative to transition away from traditional textbooks is increasingly driven by a hard-nosed fiscal reality: the traditional model is economically unsustainable. Public K-12 education spending in the U.S. averaged $16,526 per student in 2023 [9]. A significant, yet often overlooked, portion of this expenditure is directly tied to physical materials and the labor-intensive processes required to manage them. AI-driven learning tools offer a dual return on investment (ROI) by substantially reducing direct operational costs and dramatically accelerating the "time-to-mastery" for students.

"After two years of studying how students actually learn with AI, the signal is clear that AI designed responsibly and grounded in learning science strengthens how students engage with digital materials."

The transition to digital AI platforms enables schools to eliminate the recurring costs associated with physical textbook procurement, shipping, and storage. More critically, AI automation directly addresses the "human labor" cost embedded within the educational system. Schools implementing comprehensive AI solutions are reporting administrative cost reductions ranging from 20–40% [3].

The undeniable economic logic: AI's superior ROI in education compared to traditional methods.

Pedagogical ROI is a crucial metric, measured by student achievement relative to the time and resources invested. Research indicates that students using adaptive AI technologies need 40–60% less time to achieve specific learning objectives than with traditional methods [3]. This efficiency is particularly pronounced in rigorous STEM subjects. A 2025 Harvard study found that students using AI tutors learned more than twice as much in the same amount of time as those in traditional active-learning classrooms [5].

Furthermore, "active reading," a key predictor of academic success and deeper comprehension, is significantly more prevalent in AI-supported environments. Students who use AI study tools embedded in digital materials are 23 to 24 times more likely to be classified as active readers, engaging consistently in behaviors such as highlighting, note-taking, and retrieval practice [8]. This suggests a shift in how students interact with content, from passive absorption to active engagement.

Beyond the immediate classroom benefits, the ROI of AI education extends directly to the labor market. With 69% of educators believing that AI skills are indispensable for securing high-paying future jobs, the institutional pivot to AI represents a necessary, forward-looking investment in "human capital" [5]. Districts that fail to make this pivotal shift risk producing graduates who lack the essential digital literacy required for a technology-rich work environment, thereby inadvertently creating a secondary economic burden of widespread retraining and potential unemployment.

Measuring Value Beyond Spending

Administrators should evolve their metrics from "per-pupil spending" to "per-pupil outcome efficiency" (PPOE). By tracking time-to-mastery for specific curriculum standards, districts can justify the transition from static textbooks to dynamic AI platforms with empirical evidence. Reinvesting the 20–40% administrative savings into professional development is highly recommended, since teacher-AI synergy is the ultimate driver of long-term ROI and pedagogical success.
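
The article does not give a formula for PPOE, so the following is only one possible way to operationalize the time-to-mastery tracking it recommends. The record fields, cohort labels, standard identifiers, and hours below are all invented for illustration; they are not measurements from any district.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class MasteryRecord:
    standard: str            # curriculum standard identifier (hypothetical label)
    cohort: str              # "textbook" or "ai"
    hours_to_mastery: float  # instructional hours until the standard was mastered


def mean_hours(records, cohort):
    """Average time-to-mastery for one cohort."""
    return mean(r.hours_to_mastery for r in records if r.cohort == cohort)


def time_reduction(records):
    """Fractional reduction in time-to-mastery of the AI cohort versus textbooks."""
    baseline = mean_hours(records, "textbook")
    return (baseline - mean_hours(records, "ai")) / baseline


# Made-up sample data, used only to show the calculation.
records = [
    MasteryRecord("MATH.7.A", "textbook", 10.0),
    MasteryRecord("MATH.7.A", "ai", 6.0),
    MasteryRecord("MATH.7.B", "textbook", 8.0),
    MasteryRecord("MATH.7.B", "ai", 4.0),
]
print(f"Time-to-mastery reduction: {time_reduction(records):.0%}")  # 44%
```

A district could compare a figure like this against the 40–60% range cited earlier before committing budget, provided the two cohorts are otherwise comparable.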

Technical Architectures: Grounding, Hallucinations, and Mastery Engines

The technical landscape of 2026 has matured considerably, moving far past the simplistic "black box" models characteristic of early generative AI. Modern educational platforms are now meticulously built upon complex architectures, specifically designed to circumvent the two most significant hurdles in the transition to AI-driven learning: the pervasive "hallucination" of facts and the critical absence of coherent pedagogical structure.

"Khanmigo relies on large language models to engage students in guided questioning rather than supplying direct answers, encouraging critical thinking and persistence."

Hallucinations, the generation of plausible but factually incorrect information, remain the primary technical risk within AI systems. By 2026, the industry has largely standardized on Retrieval-Augmented Generation (RAG) to anchor AI models in verified content. RAG turns a Large Language Model (LLM) into an "open book" system: the model must consult a curated database of curriculum-aligned content before it generates a response [12].
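
The "open book" idea can be sketched in a few lines. The example below is a deliberately simplified stand-in: it uses word overlap instead of the embedding search that production RAG systems typically rely on, and the two-passage corpus, function names, and prompt wording are all assumptions made for illustration. The key behavior is that the prompt is built only from a retrieved passage, and the system declines when nothing relevant is found.

```python
import re

# Hypothetical curated, curriculum-aligned passages (illustrative only).
CURRICULUM = {
    "photosynthesis": "Photosynthesis converts light energy, water, and carbon "
                      "dioxide into glucose and oxygen.",
    "fractions": "To add fractions with unlike denominators, first rewrite them "
                 "with a common denominator.",
}


def words(text):
    """Lowercase a string and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, min_overlap=2):
    """Return the passage sharing the most words with the question, or None."""
    q = words(question)
    best_key, best_score = None, 0
    for key, passage in CURRICULUM.items():
        score = len(q & (words(passage) | {key}))
        if score > best_score:
            best_key, best_score = key, score
    return CURRICULUM[best_key] if best_score >= min_overlap else None


def build_prompt(question):
    """Ground the model in a retrieved source, or refuse if none is found."""
    passage = retrieve(question)
    if passage is None:
        return "NO_SOURCE: decline to answer and refer the student to the teacher."
    return f"Answer using ONLY this source:\n{passage}\n\nQuestion: {question}"


print(build_prompt("How do I add fractions with unlike denominators?"))
print(build_prompt("Who won the 1998 World Cup?"))
```

The second call returns the `NO_SOURCE` message because no curriculum passage overlaps with the question, which is the refusal path a grounded tutor needs.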

Technical nuances of current hallucination mitigation strategies include:

- Symbolic Math Integration: Leading platforms such as Khanmigo have moved away from relying on LLMs for complex math computations. Instead, they use the LLM for dialogue and pass numerical problems to a specialized symbolic calculator engine, so that results are computed deterministically rather than predicted by the language model [13].

- Chain-of-Thought (CoT) Verification: Advanced models are instructed to "think" behind the scenes, writing out multiple candidate solution paths and checking them against known constraints before presenting a final answer to the student. This process has been shown to improve accuracy in catching and pinpointing student mistakes [13].

- Dynamic Course Content Integration (DCCI): Platforms like 360Learning empower districts to upload their specific PDFs and transcripts, thereby creating a highly localized knowledge graph that the AI must prioritize over its general training data, ensuring contextual relevance [10].
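
The first strategy in the list above, routing arithmetic to a deterministic engine, can be illustrated with Python's standard library. This is a sketch of the general pattern only, not Khanmigo's actual implementation; the function names are hypothetical, and the evaluator handles only integer arithmetic with `+`, `-`, `*`, and `/`.

```python
import ast
import operator
from fractions import Fraction

# Only these binary operators are allowed; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def evaluate_exact(expression):
    """Evaluate integer arithmetic exactly, without calling any language model."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        if (isinstance(node, ast.Constant) and isinstance(node.value, int)
                and not isinstance(node.value, bool)):
            return Fraction(node.value)
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval"))


def check_student_answer(expression, student_answer):
    """Compare a student's answer with the exactly computed result."""
    return evaluate_exact(expression) == Fraction(student_answer)


print(evaluate_exact("1/3 + 1/4"))                 # 7/12
print(check_student_answer("1/3 + 1/4", "7/12"))   # True
print(check_student_answer("1/3 + 1/4", "2/7"))    # False
```

In this pattern, the language model would only phrase the feedback; whether the answer is right is decided by the exact computation.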

The 2026 generation of educational platforms focuses heavily on "mastery learning" principles, in which the AI functions as a persistent, adaptive coach rather than a static information source.

A subtle but important limitation is the "tokenization problem." LLMs process text in sub-word units (tokens), which can lead to inaccuracies in highly specialized disciplines such as chemistry or linguistics. For instance, a complex chemical formula might be split into tokens that distort its meaning, potentially producing "intrinsic hallucinations" in which the model misreports a solution despite having access to the correct underlying data [12].
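
A toy example shows how such a split can happen. The vocabulary below is invented for illustration and the greedy longest-match rule is a simplified stand-in for the byte-pair encoding that real models generally use, so the exact splits will differ in practice.

```python
# Toy vocabulary: an assumption for illustration, not any real model's tokenizer.
TOY_VOCAB = {"C6", "H1", "2O", "C", "H", "O",
             "0", "1", "2", "3", "4", "5", "6", "7", "8", "9"}


def greedy_tokenize(text, vocab, max_len=2):
    """Split text left to right, always taking the longest vocabulary match."""
    tokens, i = [], 0
    while i < len(text):
        for size in range(max_len, 0, -1):
            piece = text[i:i + size]
            if piece in vocab:
                tokens.append(piece)
                i += len(piece)
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return tokens


# Glucose: the subscript 12 belongs to hydrogen, but the split separates its digits.
print(greedy_tokenize("C6H12O6", TOY_VOCAB))  # ['C6', 'H1', '2O', '6']
```

Here the "12" that belongs to hydrogen is divided between the tokens `H1` and `2O`, so no single token carries the fact that there are twelve hydrogen atoms.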

Rigorous Technical Vetting for AI Tools

When procuring AI tools, IT departments should demand "Hallucination Logs" and documented evidence of Retrieval-Augmented Generation (RAG) implementation. A "pure" LLM should never be used for high-stakes subjects like mathematics; ensure the platform integrates a secondary symbolic processing layer for reliable computation. Schools should also prioritize platforms that support "Closed-Loop Training," in which student data is never used to improve the vendor's general model, protecting both intellectual property and student privacy [15].

Regulatory Guardrails: The 2026 Accountability Phase

As of August 2026, the "wild west" era of classroom AI has officially concluded. Regulatory bodies have decisively moved beyond merely issuing "guidance" and have transitioned into a phase of active enforcement and comprehensive policy monitoring. This shift is most acutely pronounced within the European Union, but its ripple effects are being felt globally as vendors are compelled to standardize their products to meet the highest compliance benchmarks worldwide.

"The Statement represents the united position of 61 authorities and has been issued in response to serious concerns about artificial intelligence (AI) systems... especially concerning potential harms to children."

August 2, 2026, marked the full operationalization of the EU AI Act's mandates for high-risk systems. Crucially, most AI applications used in education, including those for admissions, automated grading, and guiding a student's learning trajectory, are now formally classified as "high-risk" [4]. Alongside this classification, the Act imposes several stringent requirements on schools:

- Article 4 (AI Literacy): Since February 2, 2025, educational institutions have been legally obligated to ensure that staff members operating AI systems possess a sufficient level of AI literacy [16]. This obligation is often overlooked and requires proactive training across the educational workforce.

- Article 50 (Transparency): AI-generated or manipulated content must be clearly labeled as such. For schools, this translates to a requirement to inform students and parents when an AI is assessing work or generating feedback [4].

- Prohibited Practices: The Act prohibits the deployment of emotion-inference systems in educational contexts, with a narrow exception for medical or safety purposes. AI that attempts to interpret a student's mood or internal state through biometric data is otherwise banned, safeguarding student privacy and emotional well-being [17].

In parallel to the EU AI Act, UNESCO has released its twin AI competency frameworks, specifically tailored for teachers and students, with the latest update in January 2026 [18]. The framework for teachers delineates 15 core competencies across five critical dimensions, emphasizing a profound "human-centered mindset." This framework underscores that AI should serve to support, rather than replace, the essential human interaction between educator and learner [18].

In the United States, the Department of Education has introduced "Proposed Supplemental Priority 4," which actively advances the integration of AI in education through targeted federal grant funds [19]. This priority specifically encourages the use of AI for high-impact tutoring and college pathway exploration while simultaneously mandating rigorous parent and teacher engagement in all decisions pertaining to tool deployment.

Mandatory Compliance Audits

Districts should establish an "AI Governance Committee" that includes legal counsel, IT leads, and parent representatives. Before 2027, every district should have a fully implemented "Acceptable Use Policy" (AUP) that clearly distinguishes low-stakes AI use (e.g., brainstorming) from high-stakes use (e.g., grading). The policy must ensure that any high-stakes tool meets the documentation requirements of a "High-Risk" system [20].

Case Studies in Inclusivity: Neurodiversity and the "AI Divide"

The transition from traditional textbooks to AI-driven learning is not merely a digital upgrade; it is fundamentally an equity initiative. For the estimated one in five people worldwide with dyslexia, or the 10% of children living with ADHD, a physical textbook can be a substantial and sometimes insurmountable barrier to effective learning [14]. AI-driven tools address this through "personalized scaffolding" that adapts dynamically to these diverse cognitive needs.

"These tools help remove learning barriers—whether a student has a learning disability, is a multilingual learner, has a temporary or situational challenge, or needs additional support."

Technical scaffolding for neurodivergence includes:

- Executive Function Support: Innovative tools such as Litero.ai actively assist students with ADHD by intelligently breaking down large, complex writing assignments into manageable components and providing gentle, timely prompts to help maintain focus and task completion [14].

- Visual and Auditory Customization: Platforms like "AI-Learners" (PreK-2) empower teachers to customize color schemes and visual modes, thereby creating learning environments that optimally support students with diverse sensory processing needs [22].

- Communication Autonomy: For students with complex communication needs, generative AI serves as a powerful complement to traditional Augmentative and Alternative Communication (AAC) systems by personalizing language supports in real-time, fostering greater autonomy [21].

While AI offers immense benefits, it also risks creating a new and potentially profound "AI Divide." Approximately one-third of the global population remains offline, and even within technologically advanced nations like the U.S., digital inclusion remains a critical gap [4]. Closing it demands solutions that work without reliable connectivity.

To combat this digital disparity, 2026 has seen the rollout of "Edge AI" solutions designed for rural districts [23]. These modular machine learning models are deployed locally on school servers or individual devices, enabling full offline operation. The systems synchronize student progress to the cloud only when a connection becomes available, so that a student in the Mississippi Delta or rural Appalachia has the same access to a 24/7 AI tutor as a student in a fiber-connected urban center [23].
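
The sync-when-connected behavior described above follows a common "store and forward" pattern, sketched below. The class, method names, and event fields are hypothetical and not taken from any specific Edge AI product; a real deployment would also need durable on-disk storage and conflict handling, which this sketch leaves out.

```python
from collections import deque


class OfflineProgressStore:
    """Queue progress events locally and flush them when a connection appears."""

    def __init__(self, upload):
        self._upload = upload      # callable that sends one event to the cloud
        self._pending = deque()

    def record(self, student_id, standard, score):
        """Save a progress event locally; works with or without connectivity."""
        self._pending.append(
            {"student": student_id, "standard": standard, "score": score}
        )

    def sync(self, online):
        """Upload queued events in order; return how many were sent."""
        sent = 0
        while online and self._pending:
            event = self._pending[0]
            self._upload(event)      # if this raises, the event stays queued
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self):
        return len(self._pending)


# Usage: a plain list stands in for the cloud endpoint.
cloud = []
store = OfflineProgressStore(cloud.append)
store.record("s-001", "MATH.7.A", 0.9)
store.record("s-001", "MATH.7.B", 0.7)
print(store.sync(online=False), store.pending)  # 0 2
print(store.sync(online=True), store.pending)   # 2 0
```

An event is removed from the queue only after the upload call returns, so a dropped connection mid-sync leaves the remaining progress intact for the next attempt.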

Inclusive Procurement for Equity

Districts must prioritize "Mobile-First" and "Offline-Capable" AI platforms in their procurement process to bridge the digital divide. When evaluating tools for neurodivergent learners, avoid those that solely focus on grammar; instead, seek out "Process-Oriented" tools like Litero.ai that actively support the complex cognitive steps of planning and organization [14]. Ensuring genuine equity necessitates that the most advanced tools are distributed based on "medical need" and explicitly integrated into student Individualized Education Programs (IEPs) [24].

Socio-Technical Implications: The Teacher-AI-Student Dynamic

The final, perhaps most intricate, hurdle in the comprehensive transition from traditional textbooks to AI is not purely technical or fiscal, but profoundly psychological and social. This seismic shift introduces an entirely new "teacher-AI-student" dynamic that fundamentally redefines the purpose and role of the educator in the 21st century classroom.

"How will toddlers who learn 'empathy' from sycophantic bots rather than people develop the mutual empathic curiosity crucial for successful relationships?"

In 2026, the most successful and adaptive classrooms are those operating under a human-AI hybrid model, where AI adeptly handles the "routines," and humans masterfully handle the "nuances." AI takes over laborious tasks such as grading, attendance tracking, and first-level content explanation, effectively freeing teachers to concentrate on the deeply human and irreplaceable aspects of education: fostering emotional connection, providing ethical guidance, and offering personalized mentorship [26]. This optimal synergy ensures that technology enhances, rather than diminishes, the human element of learning.

However, significant and often underestimated risks remain:

- Technostress and Fatigue: An over-reliance on AI is increasingly linked to digital fatigue and a reduction in vital face-to-face interactions, which, over time, can diminish critical emotional intelligence in developing learners [27].

- The Empathy Gap: There is a growing concern that children who primarily interact with "sycophantic" AI bots, designed to be endlessly patient and agreeable, may struggle to develop the "mutual empathic curiosity" required for healthy human relationships and active democratic participation [25]. The long-term societal implications are significant.

- Trust Erosion: As sophisticated deepfakes become "routine, scalable, and cheap," the line between authentic and manipulated information becomes increasingly blurred [25]. This places a heavy and unprecedented burden on schools to equip students with advanced "media literacy" skills to navigate an increasingly complex information landscape.

The ongoing transition is being diligently managed through what analysts refer to as "Accountability-Driven Governance." This framework signifies a decisive move beyond the "innovation for innovation's sake" phase towards a model where AI is rigorously judged by its demonstrated ability to genuinely advance knowledge, expand human understanding, and, crucially, find inclusive ways to empower people [25].

Protecting the Core Human Connection

Districts must establish and enforce clear "Human-in-the-Loop" (HITL) requirements. AI should never be permitted to have the final say in high-stakes decisions such as grading, disciplinary actions, or critical student assessments. Schools should proactively mandate "Screen-Free Zones" and deeply integrate social-emotional learning (SEL) programs to counteract the potential isolating effects of highly personalized AI tutors. The overarching goal is to ensure that AI functions as a powerful "force multiplier" for the teacher, acting as an indispensable assistant, rather than an outright surrogate for human instruction and empathy [11].
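
A "Human-in-the-Loop" requirement can be enforced in software as a simple gate. The sketch below is one hypothetical way to express it; the category names, the `Decision` fields, and the `PENDING_HUMAN_REVIEW` status are assumptions for illustration, not part of any named platform or regulation.

```python
from dataclasses import dataclass
from typing import Optional

# Decision types treated as high-stakes (assumed categories, based on the text).
HIGH_STAKES = {"grading", "discipline", "assessment"}


@dataclass
class Decision:
    kind: str
    ai_recommendation: str
    teacher_approval: Optional[str] = None  # set only by a human reviewer


def finalize(decision):
    """Return the final outcome; the AI never has the last word on high stakes."""
    if decision.kind in HIGH_STAKES:
        if decision.teacher_approval is None:
            return "PENDING_HUMAN_REVIEW"
        return decision.teacher_approval
    return decision.ai_recommendation


print(finalize(Decision("grading", "B+")))                    # PENDING_HUMAN_REVIEW
print(finalize(Decision("grading", "B+", "A-")))              # A-
print(finalize(Decision("brainstorming", "Try an outline")))  # Try an outline
```

The teacher's entry overrides the AI recommendation whenever the decision type is high-stakes, while low-stakes suggestions pass through unchanged.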

Conclusion: The Path to 2030

The transition from physical textbooks to AI-driven learning tools by 2026 represents an irreversible metamorphosis of the educational sector. The data is unequivocally clear: the economic efficiencies and profound pedagogical gains afforded by AI significantly outweigh the static limitations inherent in the print era. However, the successful navigation of this monumental shift hinges critically on three interconnected pillars: Rigorous Technical Grounding (to systematically eliminate hallucinations and ensure factual accuracy), Robust Regulatory Compliance (to steadfastly protect student rights and privacy), and Deliberate Human-Centered Pedagogy (to proactively prevent social isolation and foster genuine human connection).

As we look toward 2030, the "Great Decoupling" is poised to forge a global educational commons: a dynamic and equitable space where high-quality, personalized instruction is recognized as a universal right rather than a geographical privilege. The fundamental challenge for 2026 is not whether to adopt AI, but how to govern its deployment with the transparency, ethical integrity, and foresight required to protect and empower the next generation of learners.

Disclaimer: This article discusses educational topics for informational purposes only. The content is not intended to serve as professional academic counseling or career guidance. Please consult our full disclaimer for more information.